Publications

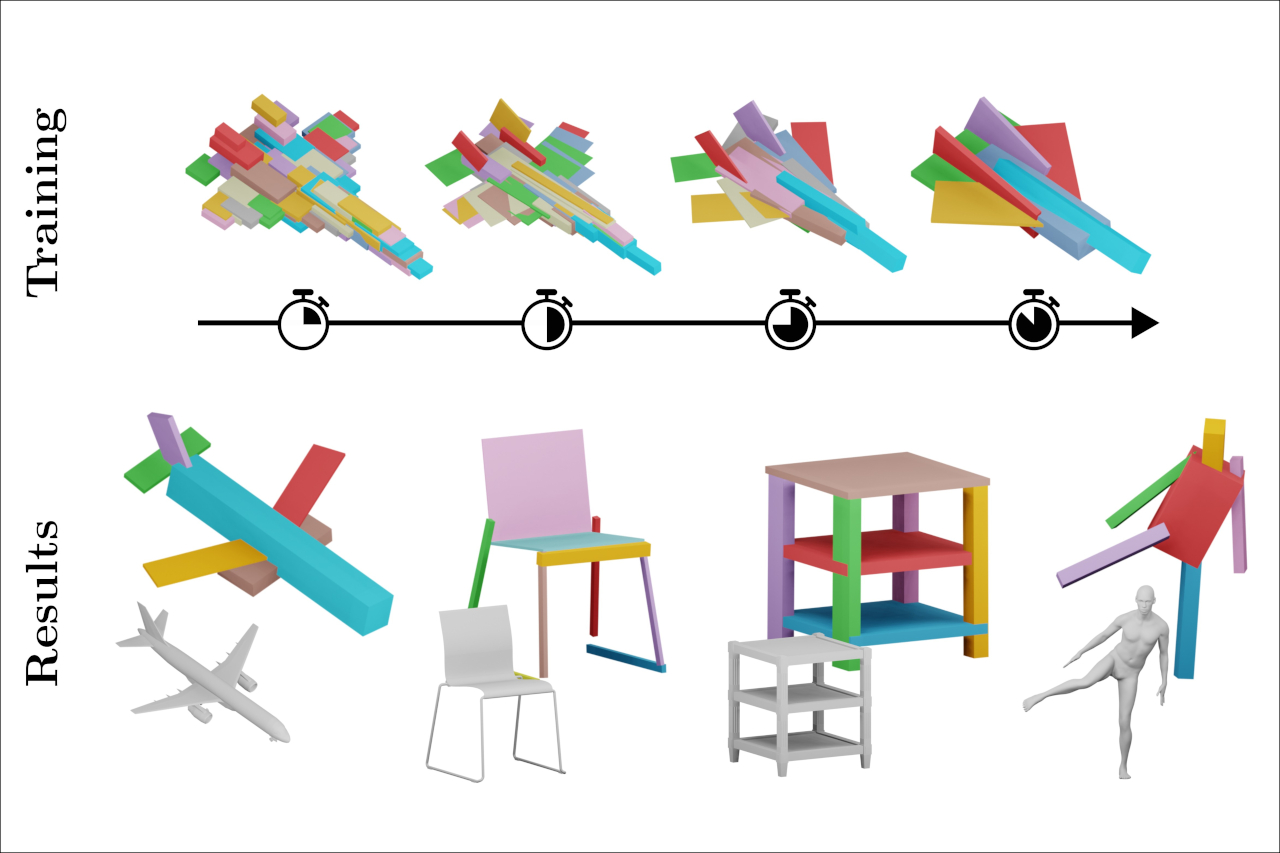

Self-supervised Learning of Fine-to-Coarse Cuboid Shape Abstraction

The abstraction of 3D objects with simple geometric primitives like cuboids allows us to infer structural information from complex geometry. Such abstraction is important for 3D shape understanding, structural analysis, and geometric modeling. We introduce a novel fine-to-coarse self-supervised learning approach to abstract collections of 3D shapes. Our architectural design allows us to reduce the number of primitives from hundreds (fine reconstruction) to only a few (coarse abstraction) during training. This allows our network to optimize the reconstruction error and adhere to a user-specified number of primitives per shape while simultaneously learning a consistent structure across the whole collection of data. We achieve this through our abstraction loss formulation, which increasingly penalizes redundant primitives. Furthermore, we introduce a reconstruction loss formulation that accounts not only for surface approximation but also for volume preservation. Combining both contributions allows us to represent 3D shapes more precisely with fewer cuboid primitives than previous work. We evaluate our method on collections of man-made and humanoid shapes, comparing against previous state-of-the-art learning methods on commonly used benchmarks. Our results confirm an improvement over previous cuboid-based shape abstraction techniques. Furthermore, we demonstrate our cuboid abstraction in downstream tasks like clustering, retrieval, and partial symmetry detection.

@article{kobsik2026cuboid,

title={Self-supervised Learning of Fine-to-Coarse Cuboid Shape Abstraction},

author={Kobsik, Gregor and Henkel, Morten and He, Yanjiang and Czech, Victor and Elsner, Tim and Lim, Isaak and Kobbelt, Leif},

year={2026},

journal={Computer Graphics Forum},

volume={45},

number={2},

}

Embedding Optimization of Layouts via Distortion Minimization

Given an embedding of a layout in the surface of a target mesh, we consider the problem of optimizing the embedding geometrically. Layout embeddings partition the surface into multiple disk-like patches, making them particularly useful for parametrization and remeshing tasks, such as quad-remeshing, since these problems can then be solved on simpler subdomains. Existing methods either cannot guarantee that patch connectivity is maintained, which limits downstream applications, or are specialized for quad layout optimization and rely on principal curvature information. We propose a framework that balances per-patch distortion minimization with strict connectivity control through an explicit representation. Inserting additional nodes along layout arcs allows the arcs to be embedded as piecewise geodesic curves on the surface. This sampling of arcs provides additional flexibility where required, enabling joint optimization of both node positions and arc embeddings. Our representation naturally supports a multi-resolution workflow: optimization on coarse meshes can be prolongated to high-resolution inputs. We demonstrate its effectiveness in applications requiring connectivity-preserving, low-distortion surface layouts.

@article{heuschling2026layoutOpt,

title={Embedding Optimization of Layouts via Distortion Minimization},

author={Heuschling, Alexandra and Lim, Isaak and Kobbelt, Leif},

year={2026},

journal={Computer Graphics Forum},

volume={45},

number={2},

}

SPLOCIS - Extending SPLOMs to a Scatterplot Cube with Interactable Shadows for Immersive Analysis in Virtual Reality

In data analysis, scatterplots serve as an initial tool for exploring the relationships between two or three attributes. While scatterplot matrices (SPLOMs) display every attribute combination through numerous 2D scatterplots to show a concise overview of a multivariate dataset, this approach is not directly suitable for 3D scatterplots due to visual clutter. Since research has shown that immersive virtual environments can enhance data analysis compared to traditional 2D desktop setups, especially for spatial analysis tasks, we propose an interactive system called SPLOCIS that uses virtual reality to let users interactively filter and select 3D scatterplots from all possible attribute combinations. Our user study, combining both qualitative and quantitative results, demonstrates that SPLOCIS is a particularly novel and stimulating approach to working with multivariate data in immersive environments. It enables users to solve classic data exploration tasks efficiently and accurately without imposing unexpectedly high task loads. Moreover, our findings provide promising suggestions for further developments.

@INPROCEEDINGS{11457517,

author={Derksen, Melanie and Dieke, Viktoria and Kuhlen, Torsten and Botsch, Mario and Weissker, Tim},

booktitle={2026 IEEE Conference on Virtual Reality and 3D User Interfaces (VR)},

title={SPLOCIS – Extending SPLOMs to a Scatterplot Cube with Interactable Shadows for Immersive Analysis in Virtual Reality},

year={2026},

pages={55-65},

keywords={Projectiles;Weapons;Radio broadcasting;Frequency modulation;Filtering;Filters;Feedback;Circuits;Brushes;Negative feedback;Virtual reality;3D user interfaces;Head-mounted display;Immersive analytics;Scatterplot;Scatterplot matrix},

doi={10.1109/VR67842.2026.00029}}
